Sunday, June 17. 2012

Couchbase Manager for Glassfish: Sticky Access

Two posts ago I presented the couchbase-manager for glassfish, and the previous post showed some numbers for a cluster of two glassfish servers. The conclusion was that a special sticky configuration was needed to turn the manager into a competitive option. From now on the manager has a sticky property that, when set, makes the implementation interact much less with the couchbase server.

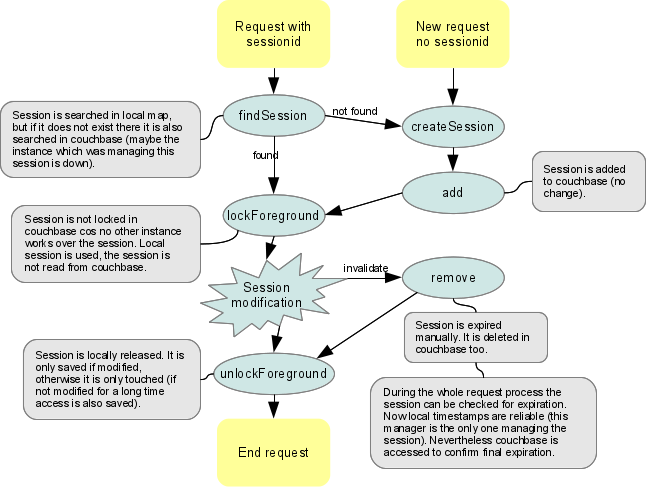

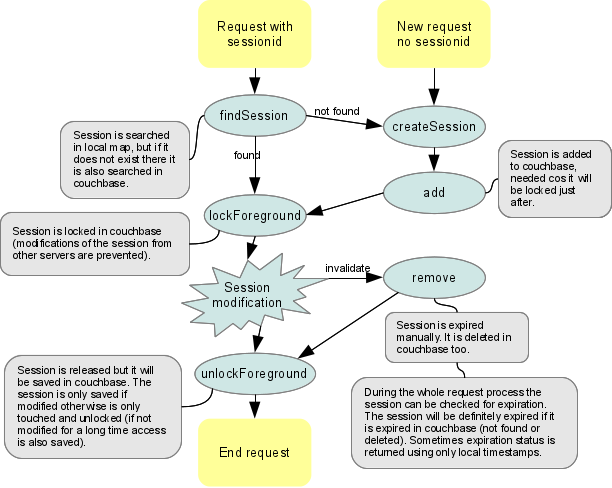

A sticky configuration means that the balancer always sends all the requests for a given session to the same glassfish server. With mod_jk this works because glassfish adds a special tag, called jvmRoute, to the JSESSIONID, which identifies the server that is attending the session. So the first request from a client (no cookie is set yet) can be distributed to any of the available servers, but the following ones (the JSESSIONID cookie marks the server) are sent to the same glassfish instance. This configuration ensures that all the requests for a session are processed by the same server and, therefore, a lot of couchbase operations can be saved. I am going to show the same diagram I presented before but using sticky processing.
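The routing decision can be sketched in a few lines of Java. This is only an illustration, not mod_jk's real code: the `StickyRouter` class, its method names and the round-robin fallback are assumptions, and it assumes the usual convention where the jvmRoute is appended to the session id after a dot (`<id>.<jvmRoute>`).

```java
import java.util.Arrays;
import java.util.List;

// Hypothetical sketch of sticky balancing; not mod_jk's real implementation.
class StickyRouter {
    private final List<String> workers; // jvmRoute names, e.g. "debian1"
    private int next = 0;               // round-robin cursor for new clients

    StickyRouter(List<String> workers) {
        this.workers = workers;
    }

    // Extracts the jvmRoute suffix from a "<id>.<jvmRoute>" session id,
    // or returns null when there is no cookie or no suffix.
    static String routeOf(String jsessionId) {
        if (jsessionId == null) {
            return null;
        }
        int dot = jsessionId.lastIndexOf('.');
        if (dot < 0 || dot == jsessionId.length() - 1) {
            return null;
        }
        return jsessionId.substring(dot + 1);
    }

    // Picks the worker named by the cookie; falls back to round-robin for
    // first requests or when the named worker is not in the list (down).
    String choose(String jsessionId) {
        String route = routeOf(jsessionId);
        if (route != null && workers.contains(route)) {
            return route;
        }
        String chosen = workers.get(next);
        next = (next + 1) % workers.size();
        return chosen;
    }

    public static void main(String[] args) {
        StickyRouter router = new StickyRouter(Arrays.asList("debian1", "debian2"));
        System.out.println(router.choose(null));               // no cookie yet
        System.out.println(router.choose("8d85190f.debian2")); // cookie names the server
    }
}
```

The fallback branch is what keeps the setup highly available: when the server named in the cookie is gone, the request simply lands on another worker.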

As before, the first method to be called is findSession (if the session was already created), which searches for the session in the local map. If it is found, that session is returned; if not, the session is fetched from couchbase. This method is more or less the same as before, because the instance that was processing the session may be down and the balancer may be redirecting the request to a new server. But although the procedure is the same, note that in a sticky configuration the session is never read from couchbase again (in non-sticky the session is read from couchbase once for each server that accesses it for the first time). If the session is new the application server calls the createSession method. The sticky setup does the same as before: the session is created locally and in couchbase.

When the session is requested to be locked (lockForeground) no access to couchbase is made. In non-sticky the session had to be locked in couchbase (preventing modifications from any other instance), but now the sticky configuration ensures that no other server is going to modify the same session, so the lock is taken only locally (inside the JVM). This part is the main improvement.
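The find and lock steps in sticky mode can be illustrated with a small self-contained sketch. Everything here is hypothetical: `FakeRepo` is an in-memory stand-in that only counts how often the repository is reached, and the lock is simplified to an exclusive `ReentrantLock`. It is not the couchbase-manager source.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.concurrent.locks.ReentrantLock;

// Hypothetical sketch; class and method names are illustrative only.
class StickyFindAndLock {
    // Stand-in for couchbase that counts how often it is reached.
    static class FakeRepo {
        final Map<String, String> data = new HashMap<>();
        int calls = 0;
        String get(String id) { calls++; return data.get(id); }
        void add(String id, String value) { calls++; data.put(id, value); }
    }

    static class LocalSession {
        final String id;
        String value;
        final ReentrantLock lock = new ReentrantLock(); // JVM-only lock
        LocalSession(String id, String value) { this.id = id; this.value = value; }
    }

    final FakeRepo repo;
    final Map<String, LocalSession> local = new HashMap<>();

    StickyFindAndLock(FakeRepo repo) { this.repo = repo; }

    // New sessions are stored locally and in the repository.
    LocalSession createSession(String id, String value) {
        LocalSession s = new LocalSession(id, value);
        local.put(id, s);
        repo.add(id, value);
        return s;
    }

    // Local map first; the repository is read only on a miss (failover).
    LocalSession findSession(String id) {
        LocalSession s = local.get(id);
        if (s == null) {
            String value = repo.get(id);
            if (value == null) {
                return null;
            }
            s = new LocalSession(id, value);
            local.put(id, s);
        }
        return s;
    }

    // Sticky mode: no repository call, only the in-JVM lock.
    void lockForeground(LocalSession s) { s.lock.lock(); }
    void unlockForeground(LocalSession s) { s.lock.unlock(); }
}
```

With two `StickyFindAndLock` instances sharing one `FakeRepo`, the second instance reads the repository exactly once for a session created by the first, which mirrors the failover case described above.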

The session is then managed by the JavaEE application.

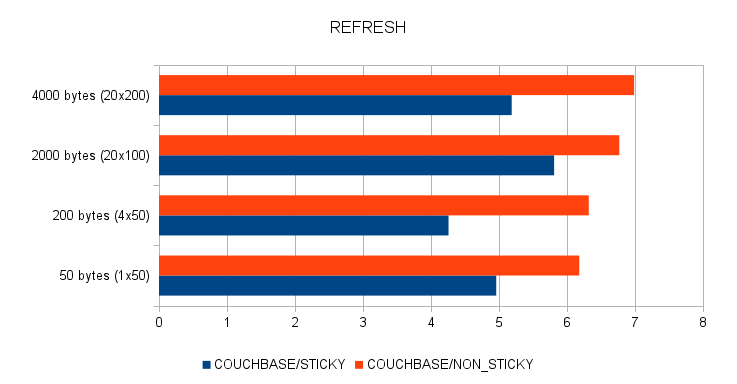

As before, if the session is dirty (modified by the application) it is saved in couchbase, and if it was only accessed it is touched (refreshing its expiration). There is no big change in this step, but a refresh now executes only one method (remember that couchbase has no touchAndUnlock, which meant two calls were needed in non-sticky when refreshing). As in non-sticky, if the session has only been accessed for a long time a save is forced. Because the session is re-used, the attributes are never cleared (in non-sticky the attributes are cleared to save space and to check that the solution works).
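The decision taken when a request releases the session in sticky mode fits in one small function. This is a minimal sketch under assumptions: the `StickyRelease` class, the `Action` enum and the `maxUnsavedMs` threshold are made-up names, and the real manager may decide when to force a save differently.

```java
// Hypothetical sketch of the sticky release step; names are illustrative.
class StickyRelease {
    enum Action { SET, TOUCH }

    // Decides the single repository call made when a request ends.
    static Action onRelease(boolean dirty, long nowMs, long lastSavedMs, long maxUnsavedMs) {
        if (dirty) {
            return Action.SET;   // modified by the application: store it
        }
        if (nowMs - lastSavedMs > maxUnsavedMs) {
            return Action.SET;   // only accessed for too long: forced save
        }
        return Action.TOUCH;     // only accessed: refresh the expiration
    }

    public static void main(String[] args) {
        System.out.println(onRelease(true, 1_000, 0, 60_000));    // SET
        System.out.println(onRelease(false, 1_000, 0, 60_000));   // TOUCH
        System.out.println(onRelease(false, 120_000, 0, 60_000)); // SET
    }
}
```

Whatever branch is taken, exactly one call goes to the repository, which is the point of the sticky mode.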

Deletion and expiration are managed in just the same way. Local timestamps are checked first, but a final call against couchbase is performed to confirm that the session has really expired in the repository. The difference is that now the sticky configuration ensures that the local timestamps are accurate (because there is no access from any other instance). The repository check for expiration could even be skipped (because the timestamps are now valid), but I preferred to trust only couchbase; besides, this call is made only a few times (normally just once) per session life-cycle.

So the idea is quite simple: the sticky configuration improves performance because the manager can trust that the session is only being modified by that instance. In a normal update or refresh only one operation against couchbase is made (set or touch) and, in general, one operation is always saved (across the four operations the initial getAndLock is no longer necessary). Besides, this setup does not perform any lock/unlock operation in couchbase, and those operations are presumably more expensive. The sticky solution still guarantees High Availability: if a glassfish instance goes down, the balancer redirects its requests to another instance and the new instance recovers the session from couchbase. Obviously this sticky mode does not work with a non-sticky balancer configuration. One final comment: couchbase does not have a set operation that returns a cas (the cas is only returned by get operations). It would be nice to have a kind of sets operation that saves the object and returns the new cas. Because there is no such operation, no cas is used in the sticky configuration.

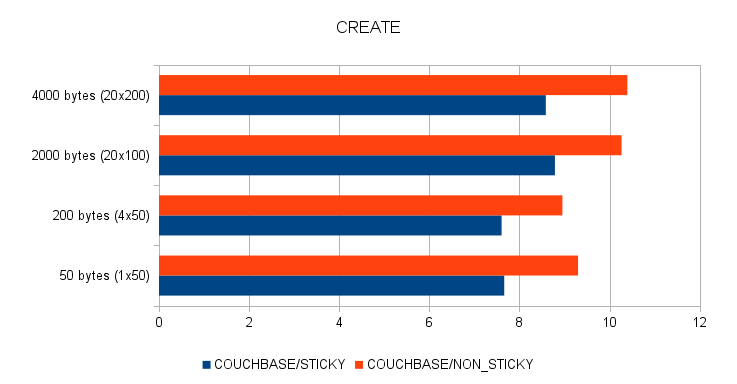

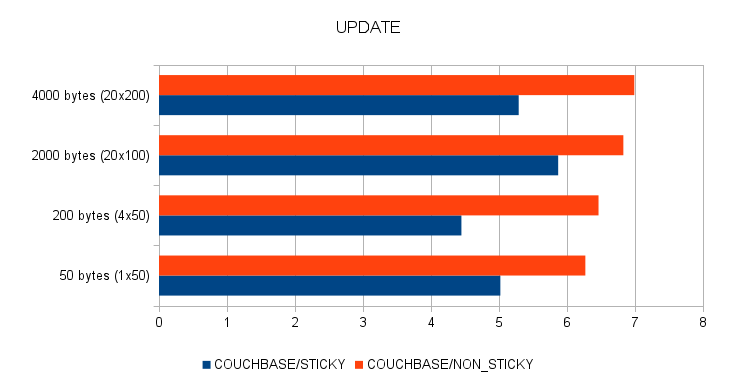

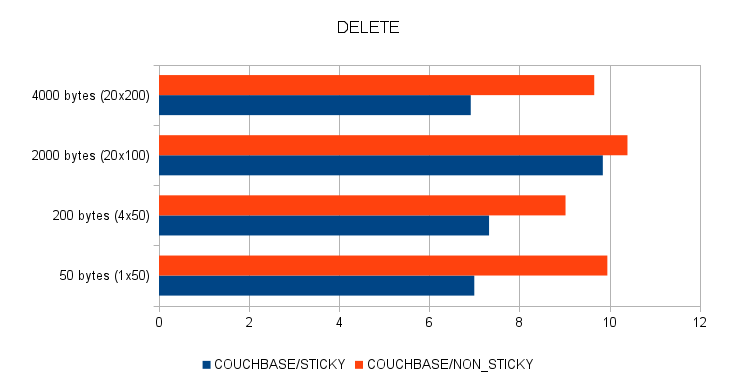

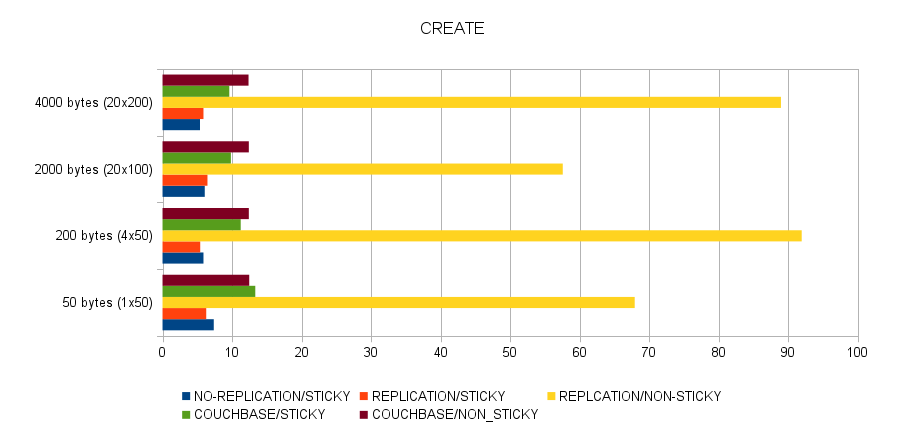

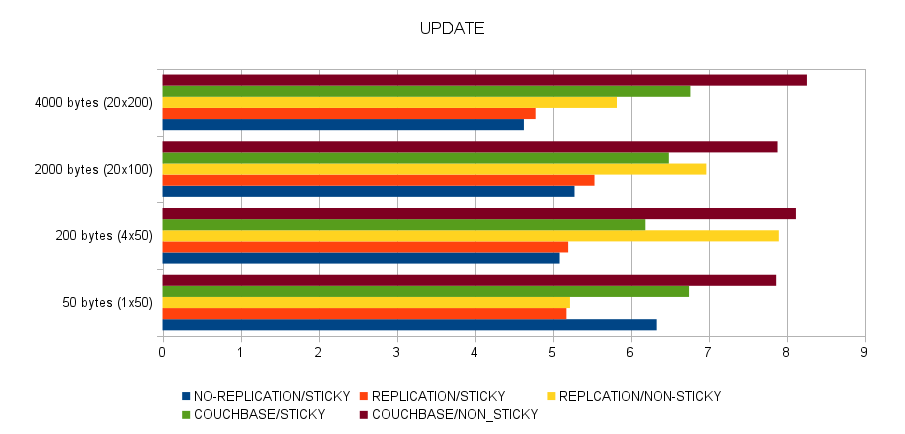

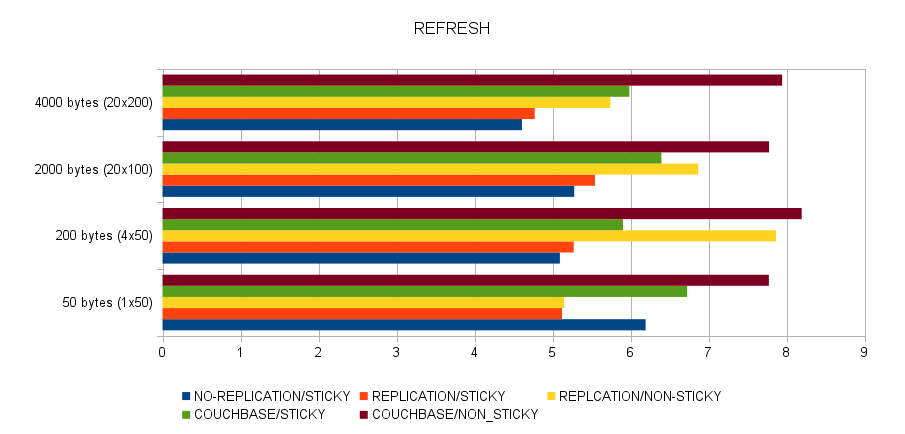

After the proper code changes I ran the same tests as in the previous post, but using just the sticky and non-sticky couchbase manager setups (again the sheet is here). I do not know why, but the times are a little better this time (even the non-sticky configuration performs better); what is clear is that sticky improves the solution a lot.

My personal conclusion is that the sticky configuration is as fast as a replicated solution, but with the enormous advantage of only storing in the internal map the sessions each instance is managing (with replication, all sessions are stored in all instances). I have a new idea to improve the non-sticky solution (but that will be covered in a new entry). Keep in mind, though, that the cost of non-sticky will never be zero. Besides, I would need some collaboration from the couchbase guys: as I said before, times would be better if some methods were implemented (touchAndUnlock and unlockAndDelete) and if buckets were more configurable (no disk storage, for example). I think the couchbase-manager is ready to be presented. I will try to clean up the whole solution, document installation and configuration, and handle errors correctly. I also need to decide the project license (I am thinking of distributing it only under GPLv2 with the classpath exception, but comments are welcome; I have no idea about licenses).

Cheerio!

Saturday, May 19. 2012

Couchbase Manager for Glassfish: More Tests

I am continuing with my little couchbase-manager project. Following the ideas I commented on in the previous entry, I have set up two KVM debian boxes with a two-instance glassfish cluster; both instances access a single couchbase installed on my laptop. Besides, each glassfish is configured with a JK-enabled listener that is balanced via jk-workers and Apache. The first debian box runs a non-sticky Apache, the second one a sticky configuration. In summary, the deployment is very similar to the one shown in the simple HA setup entry but using version 3.1.2. My first surprise was that the manager works smoothly against a balanced cluster; it is incredible when something works the first time.

I developed a JAX-WS web service application which manages the Java session (here is the netbeans project for client and server). The main idea behind the application is to test the manager and measure some times. The application has four operations: create (a new session is added to the server), update (one attribute in the session is modified), refresh (the session is only read, not changed) and delete (the session is invalidated). The attributes added to the session are byte arrays of a specified size. The web services are called by a little multi-threaded client which emulates a typical web user. The client starts several threads (different users), each of which creates a new session, performs several updates and refreshes and, finally, deletes it. The update and refresh part is executed by a second layer of threads (child threads), which perform the operations over the same session (parallel modifications). Both kinds of thread (parent and children) run for a specified number of iterations. At the end the mean and standard deviation of every operation time are displayed. The command has several arguments that let me test different aspects of the manager.

    $ java -cp . es.rickyepoderi.managertest.client.Test -h
    Unknown option: -h
    java es.rickyepoderi.managertest.client.Test [-option [value]] ...
    Options:
      -b: Base URL for the WSDL (default: http://localhost:8080/manager-test/SessionTest?wsdl)
      -n: Namespace of the WSDL (default: http://server.managertest.rickyepoderi.es/)
      -l: Local part of the WSDL (default: SessionTest)
      -t: Number of threads (default: 1)
      -ct: Number of children threads per parent thread (default: 1)
      -a: Number of attributes in session (default: 10)
      -s: Size of each attribute in bytes (default: 100)
      -ur: Ratio (percentage) of updates 0-100 (default: 50)
      -os: Sleep time inside operation in ms (default: 0)
      -ts: Sleep time inside thread between operation in ms (default: 0)
      -i: Number of iterations (default: 1)
      -ci: Number of iterations/operations for each parent iteration (default: 1)
      -d: Show debug for threads (default: false)

The first interesting thing that I tested was the locking part. I executed the client with two inner threads (the -ct option creates the second level of threads mentioned above, which handle the same session, so the session is accessed in parallel by all of them). Against the sticky Apache the times were uniform:

    $ java -cp . es.rickyepoderi.managertest.client.Test \
        -b "http://192.168.122.22/manager-test/SessionTest?wsdl" \
        -ct 2 -ci 5 -ts 100 -d
    11:32:33.504 (ExecutorParent-8|null): CREATE res=debian1:8d85190ffdf0b9bbb88f61cc45e4 time=42
    11:32:33.548 (ExecutorParent-8|ExecutorChild-11): REFRESH res=debian1:8d85190ffdf0b9bbb88f61cc45e4 time=10
    11:32:33.548 (ExecutorParent-8|ExecutorChild-12): REFRESH res=debian1:8d85190ffdf0b9bbb88f61cc45e4 time=11
    11:32:33.658 (ExecutorParent-8|ExecutorChild-11): REFRESH res=debian1:8d85190ffdf0b9bbb88f61cc45e4 time=17
    11:32:33.660 (ExecutorParent-8|ExecutorChild-12): REFRESH res=debian1:8d85190ffdf0b9bbb88f61cc45e4 time=32
    11:32:33.776 (ExecutorParent-8|ExecutorChild-11): UPDATE res=debian1:8d85190ffdf0b9bbb88f61cc45e4 time=12
    11:32:33.792 (ExecutorParent-8|ExecutorChild-12): REFRESH res=debian1:8d85190ffdf0b9bbb88f61cc45e4 time=13
    11:32:33.888 (ExecutorParent-8|ExecutorChild-11): REFRESH res=debian1:8d85190ffdf0b9bbb88f61cc45e4 time=12
    11:32:33.905 (ExecutorParent-8|ExecutorChild-12): UPDATE res=debian1:8d85190ffdf0b9bbb88f61cc45e4 time=11
    11:32:34.000 (ExecutorParent-8|ExecutorChild-11): UPDATE res=debian1:8d85190ffdf0b9bbb88f61cc45e4 time=11
    11:32:34.017 (ExecutorParent-8|ExecutorChild-12): UPDATE res=debian1:8d85190ffdf0b9bbb88f61cc45e4 time=10
    11:32:34.128 (ExecutorParent-8|null): DELETE res=debian1 time=30
    ERRORS: 0
    CREATE count: 1.0 mean: 42.0 dev: 0.0
    UPDATE count: 4.0 mean: 11.0 dev: 0.7071067811865476
    REFRESH count: 6.0 mean: 15.833333333333334 dev: 7.559026980299042
    DELETE count: 1.0 mean: 30.0 dev: 0.0

As you can see, all operations (update and refresh) take around 10 ms (the standard deviation is low). But when executing the multi-threaded client against the non-sticky server, one execution time is crazy:

    $ java -cp . es.rickyepoderi.managertest.client.Test \
        -b "http://192.168.122.21/manager-test/SessionTest?wsdl" \
        -ct 2 -ci 5 -ts 100 -d
    11:32:55.160 (ExecutorParent-8|null): CREATE res=debian2:8d8a4e2efa6998e424a432e9d4e9 time=45
    11:32:55.208 (ExecutorParent-8|ExecutorChild-11): REFRESH res=debian2:8d8a4e2efa6998e424a432e9d4e9 time=15
    11:32:55.324 (ExecutorParent-8|ExecutorChild-11): REFRESH res=debian1:8d8a4e2efa6998e424a432e9d4e9 time=10
    11:32:55.434 (ExecutorParent-8|ExecutorChild-11): REFRESH res=debian2:8d8a4e2efa6998e424a432e9d4e9 time=11
    11:32:55.546 (ExecutorParent-8|ExecutorChild-11): REFRESH res=debian1:8d8a4e2efa6998e424a432e9d4e9 time=12
    11:32:55.208 (ExecutorParent-8|ExecutorChild-12): REFRESH res=debian1:8d8a4e2efa6998e424a432e9d4e9 time=416
    11:32:55.659 (ExecutorParent-8|ExecutorChild-11): UPDATE res=debian2:8d8a4e2efa6998e424a432e9d4e9 time=12
    11:32:55.726 (ExecutorParent-8|ExecutorChild-12): REFRESH res=debian1:8d8a4e2efa6998e424a432e9d4e9 time=11
    11:32:55.838 (ExecutorParent-8|ExecutorChild-12): UPDATE res=debian2:8d8a4e2efa6998e424a432e9d4e9 time=12
    11:32:55.950 (ExecutorParent-8|ExecutorChild-12): REFRESH res=debian1:8d8a4e2efa6998e424a432e9d4e9 time=7
    11:32:56.058 (ExecutorParent-8|ExecutorChild-12): REFRESH res=debian2:8d8a4e2efa6998e424a432e9d4e9 time=13
    11:32:56.171 (ExecutorParent-8|null): DELETE res=debian1 time=25
    ERRORS: 0
    CREATE count: 1.0 mean: 45.0 dev: 0.0
    UPDATE count: 2.0 mean: 12.0 dev: 0.0
    REFRESH count: 8.0 mean: 61.875 dev: 133.86414521820248
    DELETE count: 1.0 mean: 25.0 dev: 0.0

One refresh operation lasts 416 ms, and that is because the session was locked by the other server/thread. There are two threads executing operations in parallel and, because the balancer is non-sticky, both servers are processing calls. If one server gets a session that is locked by the other, the internal glassfish implementation tries again a bit later, and that is what is shown here. So it is working: sessions are locked correctly and one server's modification cannot collide with another's. No error is displayed.
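To see how much a single 416 ms outlier moves the summary, the mean and deviation can be recomputed from the logged times. The sketch below assumes the client reports a population standard deviation (dividing by the number of samples); that is an inference from the printed numbers, not something taken from the client source, and the `OpStats` class name is made up.

```java
// Hypothetical helper that recomputes the summary lines from logged times.
class OpStats {
    static double mean(double[] xs) {
        if (xs.length == 0) {
            return 0.0;
        }
        double sum = 0.0;
        for (double x : xs) {
            sum += x;
        }
        return sum / xs.length;
    }

    // Population standard deviation: divides by n, not n - 1.
    static double dev(double[] xs) {
        if (xs.length == 0) {
            return 0.0;
        }
        double m = mean(xs);
        double squares = 0.0;
        for (double x : xs) {
            squares += (x - m) * (x - m);
        }
        return Math.sqrt(squares / xs.length);
    }

    public static void main(String[] args) {
        double[] stickyUpdate = {12, 11, 11, 10};                      // sticky UPDATE times
        double[] nonStickyRefresh = {15, 10, 11, 12, 416, 11, 7, 13};  // non-sticky REFRESH times
        System.out.println("UPDATE mean: " + mean(stickyUpdate) + " dev: " + dev(stickyUpdate));
        System.out.println("REFRESH mean: " + mean(nonStickyRefresh) + " dev: " + dev(nonStickyRefresh));
    }
}
```

The sticky UPDATE times give a mean of 11 with a deviation of about 0.71, while the non-sticky REFRESH times give a mean of 61.875 with a deviation of about 133.86, matching the summary lines above. Seven of the eight refreshes are still in the 7 to 15 ms range; the one lock wait accounts for the whole difference.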

Another interesting thing was that the replication configuration produces errors when non-sticky. It seems that replication needs some time to propagate from one server to the other (I am not really sure whether I have some error in the glassfish setup, but I did not find anything). For example:

    $ java -cp . es.rickyepoderi.managertest.client.Test \
        -b "http://192.168.122.21/manager-test/SessionTest?wsdl" \
        -ci 3 -ts 50 -d
    10:35:20.192 (ExecutorParent-8|null): CREATE res=debian1:dca46f0af1e07ac1db60e7a8157e time=44
    10:35:20.289 (ExecutorParent-8|ExecutorChild-11): REFRESH res=ERROR - debian2 time=12
    10:35:20.352 (ExecutorParent-8|ExecutorChild-11): UPDATE res=ERROR - debian1 time=475
    10:35:20.877 (ExecutorParent-8|ExecutorChild-11): UPDATE res=ERROR - debian2 time=11
    10:35:20.939 (ExecutorParent-8|null): DELETE res=debian1 time=488
    ERRORS: 3
    CREATE count: 1.0 mean: 44.0 dev: 0.0
    UPDATE count: 2.0 mean: 243.0 dev: 232.0
    REFRESH count: 1.0 mean: 12.0 dev: 0.0
    DELETE count: 1.0 mean: 488.0 dev: 0.0

After the session was created in debian1 it could not be retrieved in debian2 (I suppose the session had not been replicated yet), so another session was created (with no attributes, and that was the origin of the following errors). So obviously the use of child threads (threads that operate on the same session) also fails in the replication non-sticky environment.

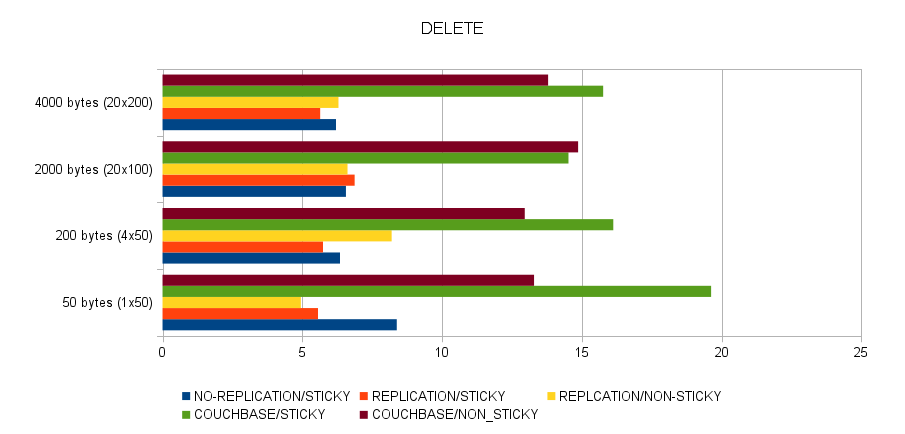

Finally I present some graphs about performance. I tested five different situations:

- No replication and sticky. The manager-test web service application was deployed without the distributable tag and was tested against the sticky Apache (non-sticky does not work because neither replication nor couchbase is used).

- Replication and sticky. The application was deployed with replication but accessed sticky.

- Replication and non-sticky. Same as before but accessing the non-sticky Apache.

- Couchbase and sticky. The manager-test is configured with the couchbase manager but accessed sticky.

- Couchbase and non-sticky. Same deployment but accessing the non-sticky Apache.

For each situation four different types of session were tested: 50 bytes (1 attribute of 50 bytes), 200 bytes (4x50), 2000 bytes (20x100) and 4000 bytes (40x200). I present the mean time table for the 5 environments and 4 sizes. In the third situation (replication and non-sticky) errors were reported (the session is not replicated yet, which produces errors). All the tests used 16 threads (-t 16), 20 iterations per thread (-i 20, so 20 sessions were created and destroyed by each thread), 50 child iterations (-ci 50, so each session was accessed, by update or refresh, 50 times before deletion) and half a second of sleep time (-ts 500, so after each operation the thread slept for 500 ms). The number of attributes (-a) and the size of each attribute (-s) were changed to test the four types of session mentioned above. I attach here the spreadsheet with all the numbers (mean and deviation), and the mean graphs are presented below.
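As a quick sanity check on the size of each run, the number of sessions and session accesses follows directly from the options. The sketch assumes -ct was left at its default of 1 and that every child thread runs -ci operations per session (which is what the debug logs with -ct 2 -ci 5 suggest); the `Workload` class is only an illustration.

```java
// Hypothetical helper that derives the size of a test run from its options.
class Workload {
    // Every parent thread creates and deletes one session per iteration.
    static long sessions(int threads, int iterations) {
        return (long) threads * iterations;
    }

    // Every child thread performs childIterations updates or refreshes per session.
    static long accesses(int threads, int iterations, int childThreads, int childIterations) {
        return sessions(threads, iterations) * childThreads * childIterations;
    }

    public static void main(String[] args) {
        // -t 16 -i 20 -ci 50, with -ct assumed to be the default 1
        System.out.println("sessions: " + sessions(16, 20));        // 320
        System.out.println("accesses: " + accesses(16, 20, 1, 50)); // 16000
    }
}
```

So each mean in the table would be taken over 320 creates, 320 deletes and 16000 update or refresh calls, with the split between the last two governed by the -ur ratio.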

Couchbase manager is just a bit slower in refresh and update operations, but create and delete operations are worse. The size of the sessions does not matter much; in all the scenarios times are more or less independent of the size. Couchbase is very reliable in non-sticky environments (the other non-sticky environment, non-sticky with replication, is very strange: creation time is enormous and errors are returned; as I said, I am not very confident in this setup). Another curiosity is that couchbase is better in sticky than in non-sticky, although the same code is executed in both cases. In conclusion, if I want my manager to be more competitive I need to implement a special sticky property that handles sticky configurations better (saving locks and gets), in just the same way Martin does in memcached-session-manager. That is the next point to implement when I have some time. Nevertheless I am very happy, because the implementation works well with two servers and times are not excessive.

Stay tuned for more news!

Friday, May 4. 2012

Couchbase Manager for Glassfish: Main Idea Born

I am sure that some of you have already guessed that I am investigating how to create a couchbase session manager for glassfish. In previous posts I studied the memcached-session-manager (a session manager for tomcat) and then you saw me integrating new bundles into glassfish. All those steps were taken in order to implement a new couchbase session manager for glassfish. This post presents the ideas I am following and the first bits of the couchbase-manager project, which is hosted on GitHub.

The main ideas behind the couchbase-manager are the following:

Couchbase is responsible for managing sessions (locks, expirations, values,...); the glassfish local sessions rely completely on the external memory repository. This is the axiom of the implementation and it determines all the following ideas.

Sessions are loaded and saved in every request. When a request comes in, the manager usually finds the session, locks it, performs all the work and releases it back to the pool of sessions. Now the whole process is exactly the same but using couchbase. The session is found (locally first; if not found it is fetched from couchbase), locked (using the getAndLock method), modified and saved (the unlock is performed via cas, or via unlock if the session was not modified).

This new way of processing means that local sessions in the internal map cannot be trusted; they are only real while locked. For this reason sessions are cleared when unlocking (attributes are emptied to save space and to test that it is really working).

Besides, the whole expiration process also relies on couchbase: all the puts, sets or adds are always done using the expiration time parameter. A session is expired if it does not exist in the external repository. Because expiration checks are performed at any time during the request lifecycle (not only when the session is locked), couchbase should strictly be checked every time, but to save calls local timestamps are used when the session is not locked (continue reading for details).

So following these ideas the request lifecycle in the couchbase manager performs the following steps (I added a little diagram of my ideas):

A request is initiated by the client browser. If the request has a session id (the session was created before) the findSession method is called. This method searches for the session locally in the internal Map, and if it is not found there the id is looked up in couchbase (the session may have been created by another server). If it is not found anywhere, null is returned. On the other hand, if the session is new (first request of the client) createSession is called: the session is created and the add method is called. In the couchbase manager this method adds the session to the internal map and also to couchbase.

After the session is retrieved (created or found) the manager is called to lock the session. It is important to remark that until it is locked the session is not reliable because it can be modified by any other server. There are two lock methods in the manager interface, lockForeground and lockBackground: the first is called in normal request processing, the second in internal actions (for example by the expiration checking task). Besides, the foreground method lets several clients lock the session concurrently (in the same server), while the background method does not. In any case, the new manager always locks the session in couchbase (accesses from other servers are prevented).
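The difference between the two lock flavours inside one server can be sketched as a shared counter plus an exclusive flag. This is a hypothetical illustration, not the glassfish classes: the `SessionLockSketch` name and the non-blocking boolean return values are assumptions, and the couchbase lock that the manager takes on top of this is left out.

```java
// Hypothetical sketch of the two lock flavours; not the glassfish source.
class SessionLockSketch {
    private int foreground = 0;         // request threads currently holding the lock
    private boolean background = false; // true while an internal task holds it

    // Several requests may hold the foreground lock at the same time.
    synchronized boolean lockForeground() {
        if (background) {
            return false;
        }
        foreground++;
        return true;
    }

    synchronized void unlockForeground() {
        if (foreground > 0) {
            foreground--;
        }
    }

    // The background lock is exclusive: no requests and no other task.
    synchronized boolean lockBackground() {
        if (background || foreground > 0) {
            return false;
        }
        background = true;
        return true;
    }

    synchronized void unlockBackground() {
        background = false;
    }
}
```

A caller that gets `false` back would retry a bit later, which is the kind of wait that shows up as an occasional slow operation when two servers compete for the same session.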

Once the session is locked it is used by the request lifecycle (user code manages the session). In this stage the session can be modified, read or deleted (invalidated), and the implementation ensures this server is the only one modifying the session.

If the session is not deleted the unlock process saves it in couchbase. In order to do that an access status of the session is maintained. If the session was modified (dirty) it is saved (the cas method is used, so the session is stored and unlocked in couchbase). If the session was only accessed (local timestamps are refreshed for expiration) the session is touched and unlocked (the pity here is that the couchbase client does not have a touchAndUnlock or unlockAndTouch method, so two calls are made). Nevertheless, if the session has been accessed but not saved for a long time (when a session is touched the internal couchbase expiration is refreshed, but not the session's own timestamps) a save is executed instead of a touch/unlock.
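The cost of one request in this scheme can be counted with a tiny in-memory stand-in for the repository. The sketch is hypothetical throughout: `FakeRepo` borrows the operation names from the text (getAndLock, cas, touch, unlock) but its signatures are invented for the example and are not the couchbase client API; the forced save after a long period without saving is not modelled.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical in-memory stand-in; this is not the couchbase client API.
class NonStickyFlow {
    static class FakeRepo {
        final Map<String, String> values = new HashMap<>();
        final Map<String, Long> locks = new HashMap<>(); // key -> cas of the lock holder
        long nextCas = 1;
        int calls = 0;

        // Returns a cas token when the lock is obtained, or -1 if already locked.
        long getAndLock(String key) {
            calls++;
            if (locks.containsKey(key)) {
                return -1;
            }
            long cas = nextCas++;
            locks.put(key, cas);
            return cas;
        }

        // Stores the value and releases the lock in a single call.
        boolean cas(String key, long cas, String value) {
            calls++;
            if (!Long.valueOf(cas).equals(locks.get(key))) {
                return false;
            }
            values.put(key, value);
            locks.remove(key);
            return true;
        }

        // Would refresh the expiration; here it only counts the call.
        void touch(String key) {
            calls++;
        }

        boolean unlock(String key, long cas) {
            calls++;
            if (!Long.valueOf(cas).equals(locks.get(key))) {
                return false;
            }
            locks.remove(key);
            return true;
        }
    }

    // Runs one non-sticky request; a null newValue means the session was only read.
    // Returns the number of repository calls the request cost.
    static int request(FakeRepo repo, String key, String newValue) {
        int before = repo.calls;
        long cas = repo.getAndLock(key);
        if (cas < 0) {
            return repo.calls - before; // locked elsewhere: the caller retries later
        }
        if (newValue != null) {
            repo.cas(key, cas, newValue); // dirty: save and unlock together
        } else {
            repo.touch(key);              // only accessed: two separate calls
            repo.unlock(key, cas);
        }
        return repo.calls - before;
    }
}
```

In this model an update costs two calls and a refresh costs three, which is why a combined touchAndUnlock operation would help.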

If the session is manually invalidated the manager is called via the remove method. This method removes the session from couchbase (if not already deleted or expired before). Expiration is the most dangerous point of the implementation: the hasExpired method of the session is called everywhere (not only when locked) so, being meticulous, the session should always be checked against couchbase (if the session exists there it is still alive; if not, it has expired). But, obviously, saving calls is necessary. For this reason, if the session is not locked the local timestamps are checked and, if the session is ok, couchbase is not accessed (a kind of cached response is given when not expired). There is no high risk because as soon as the session is locked (it is always locked during request processing) a real check is done.

The expiration task (a task that manages expiration by inactivity in the internal map) now follows the same idea: the session expiration is first checked locally (internal timestamps) and, if it is expired there, a lockBackground is performed (which checks whether the session is really deleted in couchbase).
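The expiration rule described above (trust the local timestamps only when they say the session is alive and it is not locked, otherwise ask the repository) can be written as one method. This is a simplified sketch with made-up names: `FakeRepo.exists` stands in for whatever call the manager really uses to find out whether the session is still stored.

```java
import java.util.HashSet;
import java.util.Set;

// Hypothetical sketch of the expiration check; names are illustrative.
class ExpirationCheck {
    // Stand-in for the repository that counts how often it is asked.
    static class FakeRepo {
        final Set<String> keys = new HashSet<>();
        int calls = 0;
        boolean exists(String key) {
            calls++;
            return keys.contains(key);
        }
    }

    static boolean hasExpired(FakeRepo repo, String id, boolean locked,
                              long nowMs, long lastAccessMs, long maxInactiveMs) {
        boolean locallyAlive = nowMs - lastAccessMs < maxInactiveMs;
        if (!locked && locallyAlive) {
            return false;        // cached answer, no repository call
        }
        return !repo.exists(id); // the repository has the final word
    }
}
```

A session that another server keeps alive looks expired locally but still exists in the repository, so the second branch answers that it has not expired.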

I think the basics are quite simple, but I have run into several problems setting up the manager in glassfish. I summarize my problems here:

In theory glassfish uses an OSGi architecture and a new manager is just a class that implements the PersistenceStrategyBuilder interface. But in reality the SessionManagerConfigurationHelper class limits the manager implementations to the ones that glassfish provides. There is a CUSTOM implementation, but it is not designed to be distributable, so I was forced to re-use the coherence-web name (that implementation is a memory repository implementation for the Oracle product of the same name).

More or less the same problem happens with the Manager interface. I started by extending the ManagerBase abstract class, but in the end I needed to extend StandardManager. If the manager is a StandardManager, session expiration is controlled by an internal processExpires method. If not, you need to implement a PersistentManagerBase (this is managed inside the StandardContext class). So finally I decided to extend StandardManager (some methods are overridden just for cleaning purposes).

My personal opinion is that there is so much noise in the whole manager implementation that it is quite difficult to understand. There are a lot of cross calls between manager and session and it is confusing what to extend or implement, so lots of tests and intensive code browsing are needed just to know where to put your classes. It seems that tomcat and glassfish use more or less the same classes (I am not completely sure).

Right now couchbase-manager only works with couchbase server 2.0 development preview 4 and the current git branch of spymemcached and the couchbase Java client (features like lock/unlock are new in all couchbase software).

Finally I present a little video of the current status. The famous clusterjsp is functional in just one server, and some attributes are added to the session. Because an external couchbase server is used, sessions are fully persistent (the session survives glassfish and couchbase restarts).

In summary the implementation is very poor at the moment. I have only developed the manager classes, and they were only tested manually on one server (so it is currently very poorly tested and probably does not work with two servers). Besides, there is another important point: the performance and latency of the manager. It always performs (at least) two calls to the couchbase server (getAndLock and then cas or touch/unlock), and in other cases there can be more (initial creation or deletion). Besides, some snags in the couchbase Java client add extra calls (no touchAndUnlock, no deleteAndUnlock,...). So some performance tests of the manager are necessary. These two points (a multi-server, non-sticky architecture and performance tests) are on my mind for the next weeks. But I have so little time, you know, I am...

Always outnumbered, never outgunned.